Tesla wants to make driving a thing of the past, but its Autopilot system isn’t foolproof.

The company is being sued once again, this time by the family of Walter Huang, who was killed when his Tesla Model X crashed in Mountain View, California, on March 23rd, 2018.

According to the lawsuit, as the Model X approached a “paved gore area dividing the main travel lanes of US-101 from the SH-85 exit ramp, the autopilot feature of the Tesla turned the vehicle left, out of the designated travel lane, and drove it straight into a concrete highway median.”

Huang was pronounced dead several hours later. The lawsuit alleges Tesla was “negligent and careless in failing and omitting to provide adequate instructions and warnings to protect against injuries occurring as a result of vehicle malfunction and the absence of an effective automatic emergency braking system.”

In essence, the lawsuit alleges that Tesla overstated the benefits of its Autopilot technology and that Huang believed the crossover was effectively crash-proof. The suit also claims the Model X was defective and should have been equipped with systems that would have prevented the accident.

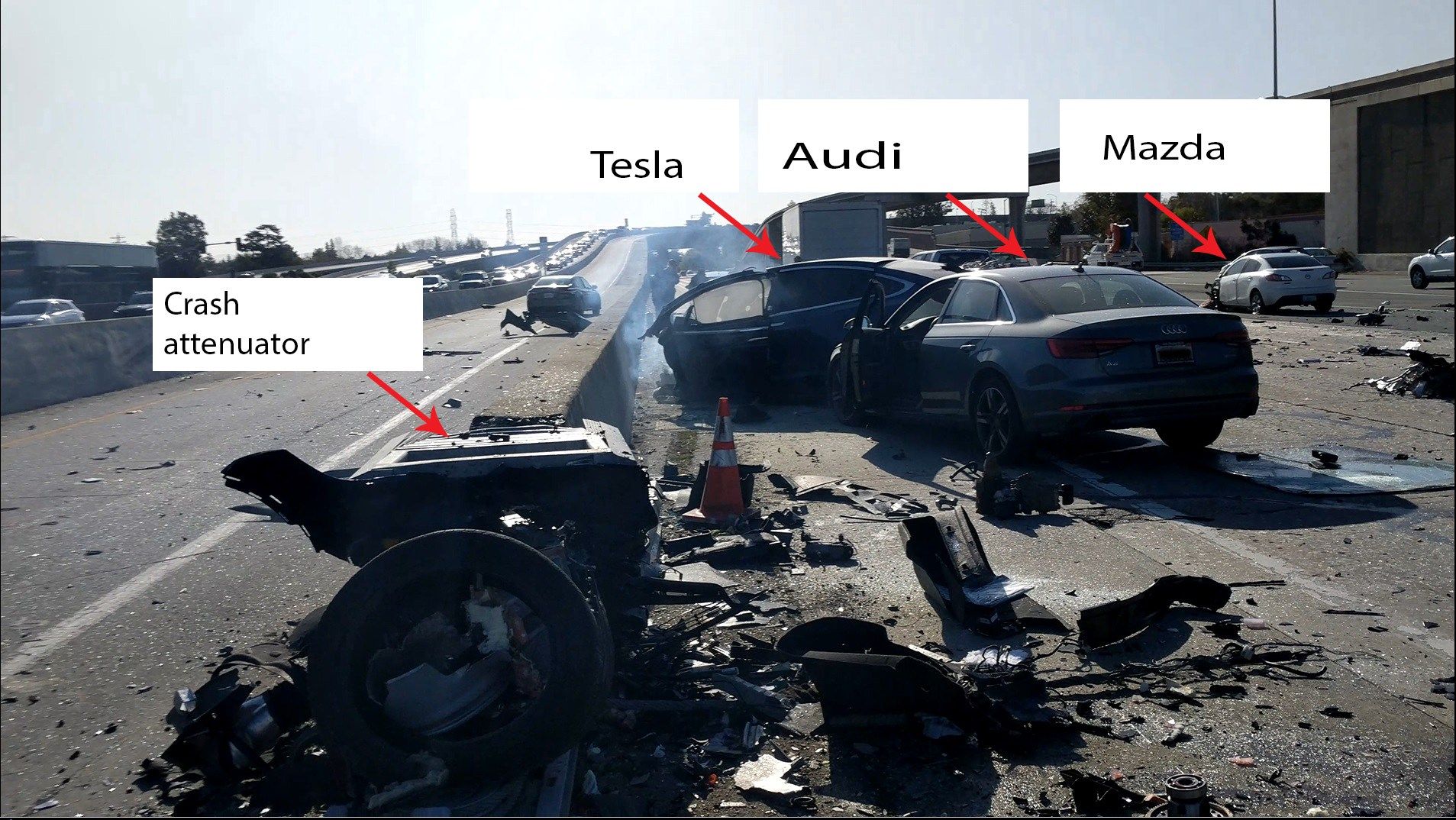

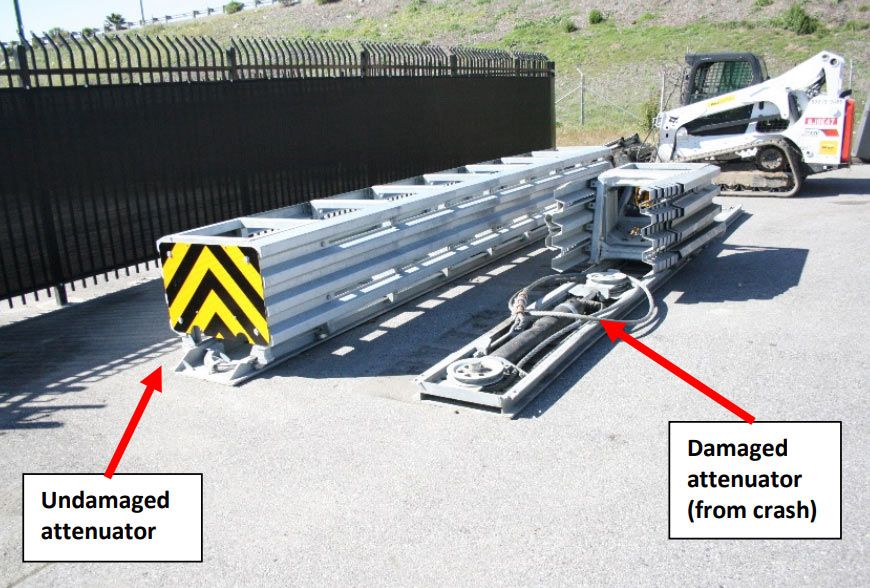

The National Transportation Safety Board looked into the crash and released its preliminary report on the accident last summer. According to the report, the Model X traveled through the gore area until it struck a crash attenuator – which was mounted on the end of a concrete barrier – at a speed of approximately 71 mph (114 km/h). After the impact, the crossover rotated counterclockwise and the front portion of the vehicle separated from the rest of the Model X.

Before the crash, the Model X provided two visual warnings and one audio alert for the driver to place their hands on the steering wheel. In the final minute before the collision, hands were detected on the steering wheel for just 34 seconds. In the final six seconds, no hands were detected on the wheel.

While Huang should have been paying attention and keeping his hands on the steering wheel, the NTSB suggested the Autopilot system did contribute to the crash. Eight seconds prior to impact, the Model X was following a lead vehicle at around 65 mph (104 km/h). One second later, the crossover “began a left steering movement” while still following the lead vehicle.

Four seconds before the crash, the Model X stopped following the lead vehicle. Because the cruise control system was set at 75 mph (120 km/h), the crossover began to speed up. The Model X then hit the barrier, and no braking or evasive steering movements were detected before the collision.

The lawsuit also names the state of California over its failure to repair the crash attenuator on the concrete barrier. The attenuator was damaged in an accident involving a Toyota Prius on March 12th, and the lawsuit says the failure to fix or replace it contributed to Huang’s death.