A Tesla Model S with its Autopilot feature engaged was involved in a crash on May 7 that killed its driver. While semi- and fully autonomous cars have crashed before, this is believed to be the first death involving a vehicle with a semi-autonomous driving feature engaged.

The crash happened last month in the small city of Williston, Florida. Ohio resident Joshua Brown, 45, was in the driver’s seat of his 2015 Model S with Autopilot activated when an 18-wheel semi made a left turn in front of the electric car. Brown died at the scene when “the car’s roof struck the underside of the trailer as it passed under the trailer”, the Levy Journal reported.

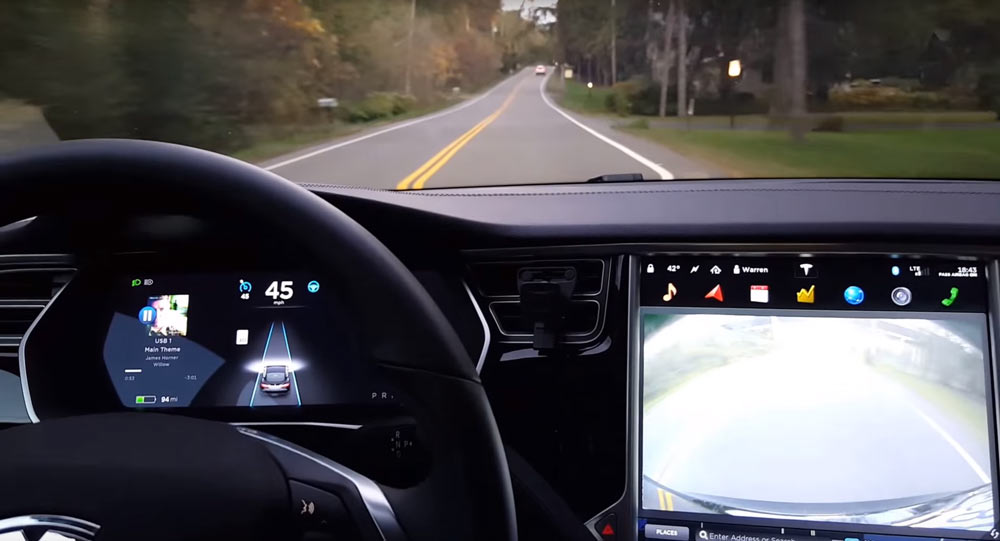

Brown was an active member of the Tesla community and had uploaded many videos to his YouTube channel, including several showing the Autopilot system. One of them caught the attention of Tesla CEO Elon Musk, who tweeted about it.

In a brief statement on Thursday, the National Highway Traffic Safety Administration (NHTSA) said it was aware of the accident and had launched a preliminary investigation, sending a team to examine both the car and the crash site in Florida.

“ODI has identified, from information provided by Tesla and from other sources, a report of a fatal highway crash involving a 2015 Tesla Model S operating with automated driving systems (“Autopilot”) activated,” said the NHTSA. “This preliminary evaluation is being opened to examine the design and performance of any automated driving systems in use at the time of the crash.”

Tesla responded to the news with a blog post titled “A Tragic Loss”. Its first paragraph states that this is the first known fatality in just over 130 million miles driven with Autopilot activated, and the post goes on to say that while Autopilot is getting better, it is not perfect and still requires the driver to remain alert. According to Tesla, “neither Autopilot nor the driver noticed the white side of the tractor trailer against a brightly lit sky, so the brake was not applied”.

Here’s Tesla’s statement in full:

“We learned yesterday evening that NHTSA is opening a preliminary evaluation into the performance of Autopilot during a recent fatal crash that occurred in a Model S. This is the first known fatality in just over 130 million miles where Autopilot was activated. Among all vehicles in the US, there is a fatality every 94 million miles. Worldwide, there is a fatality approximately every 60 million miles. It is important to emphasize that the NHTSA action is simply a preliminary evaluation to determine whether the system worked according to expectations.

Following our standard practice, Tesla informed NHTSA about the incident immediately after it occurred. What we know is that the vehicle was on a divided highway with Autopilot engaged when a tractor trailer drove across the highway perpendicular to the Model S. Neither Autopilot nor the driver noticed the white side of the tractor trailer against a brightly lit sky, so the brake was not applied. The high ride height of the trailer combined with its positioning across the road and the extremely rare circumstances of the impact caused the Model S to pass under the trailer, with the bottom of the trailer impacting the windshield of the Model S. Had the Model S impacted the front or rear of the trailer, even at high speed, its advanced crash safety system would likely have prevented serious injury as it has in numerous other similar incidents.

It is important to note that Tesla disables Autopilot by default and requires explicit acknowledgement that the system is new technology and still in a public beta phase before it can be enabled. When drivers activate Autopilot, the acknowledgment box explains, among other things, that Autopilot “is an assist feature that requires you to keep your hands on the steering wheel at all times,” and that “you need to maintain control and responsibility for your vehicle” while using it. Additionally, every time that Autopilot is engaged, the car reminds the driver to “Always keep your hands on the wheel. Be prepared to take over at any time.” The system also makes frequent checks to ensure that the driver’s hands remain on the wheel and provides visual and audible alerts if hands-on is not detected. It then gradually slows down the car until hands-on is detected again.

We do this to ensure that every time the feature is used, it is used as safely as possible. As more real-world miles accumulate and the software logic accounts for increasingly rare events, the probability of injury will keep decreasing. Autopilot is getting better all the time, but it is not perfect and still requires the driver to remain alert. Nonetheless, when used in conjunction with driver oversight, the data is unequivocal that Autopilot reduces driver workload and results in a statistically significant improvement in safety when compared to purely manual driving.

The customer who died in this crash had a loving family and we are beyond saddened by their loss. He was a friend to Tesla and the broader EV community, a person who spent his life focused on innovation and the promise of technology and who believed strongly in Tesla’s mission. We would like to extend our deepest sympathies to his family and friends.”

While the investigation is ongoing, the accident is bound to raise legitimate questions about self-driving vehicles and whether autonomous systems (and today’s drivers) are ready for prime time. Clarence Ditlow, executive director of the Center for Auto Safety, told Bloomberg that if the Autopilot system did not recognize the semi-truck, Tesla should recall every vehicle equipped with it.

“That’s a clear-cut defect and there should be a recall,” Ditlow said. “When you put Autopilot in a vehicle, you’re telling people to trust the system even if there is lawyerly warning to keep your hands on the wheel.”

Eric Noble, president of CarLab Inc., a consulting firm in Orange, California, had harsher words for Tesla, which said in its post that Autopilot “is new technology and still in a public beta phase”. Noble told Bloomberg that “No other automaker sells unproven technology to customers”.

“There’s not an experienced automaker out there who will let this kind of technology on the road in the hands of consumers without further testing,” Noble told the news agency. “They will test it over millions of miles with trained drivers, not with consumers.”